Totally appropriate, since they’re terrorists endangering the well-being of everyone and the planet. Wait…

Probably has to be renamed to “ClosedAI” then.

There are alarm clock apps which can help. You may configure how unforgiving the alarm becomes.

https://play.google.com/store/apps/details?id=com.kog.alarmclock

Changed my life.

Fair point.

I’m more with you regarding Israel. However, the context here is China, not Israel.

Also, it’s quite telling that you see Nazi Germany getting “fucked in the ass” by Russia as something bad.

Wtf. You know it’s 2024, right? Nazi Germany is no more (unless AfD and consorts form the new government).

I was not talking about WW2-times.

Why do they get attacked though?

Edit: got time to read the article:

Many of the assaults are caused by the shortage of hospital staff and family members’ frustration at the resulting long waiting times for surgery and consultations.

According to the doctors’ union ANAAO, until 2022 almost half of positions in emergency medicine were vacant. Salary-cap legislation over the past two decades to curb public spending has kept salaries low, and work schedules are punishing. For many Italian medical staff, the Covid pandemic was the tipping point, accelerating an exodus abroad. Spending plans published by Giorgia Meloni’s government envisage further healthcare cuts.

In 2023, according to the Forum delle Società Scientifiche dei Clinici Ospedalieri e Universitari Italiani, there was a shortage of approximately 30,000 doctors in Italy. Between 2010 and 2020, 111 hospitals and 113 emergency rooms closed.

Yeah, sounds totally reasonable to put soldiers in hospitals in order to solve these problems. /s smh

“Funding aggression”. Lol. No wonder Germany got fucked in the ass by Russia and China so many times with such bootlickers among the voting population.

2024 - 22 = 2002.

So probably not Nazis. No.

I wonder how you’re breathing. /j

Reading through the comments here makes one thing apparent again: clear and direct communication about one’s intentions can solve all of these misunderstandings. Being upfront avoids the unnecessary “are they into me or not” over-analysis, and it avoids missing such more or less subtle hints altogether.

If you’re interested in someone, go for them! Tell them about your interest. It benefits you both. They’ll know, which can help in case they’re interested as well, and you’ll know what to expect whether they’re interested or not. This can also save you a lot of time, heart- and headache.

I am too lazy to read the report rn, is there anything about the reasons for why that is?

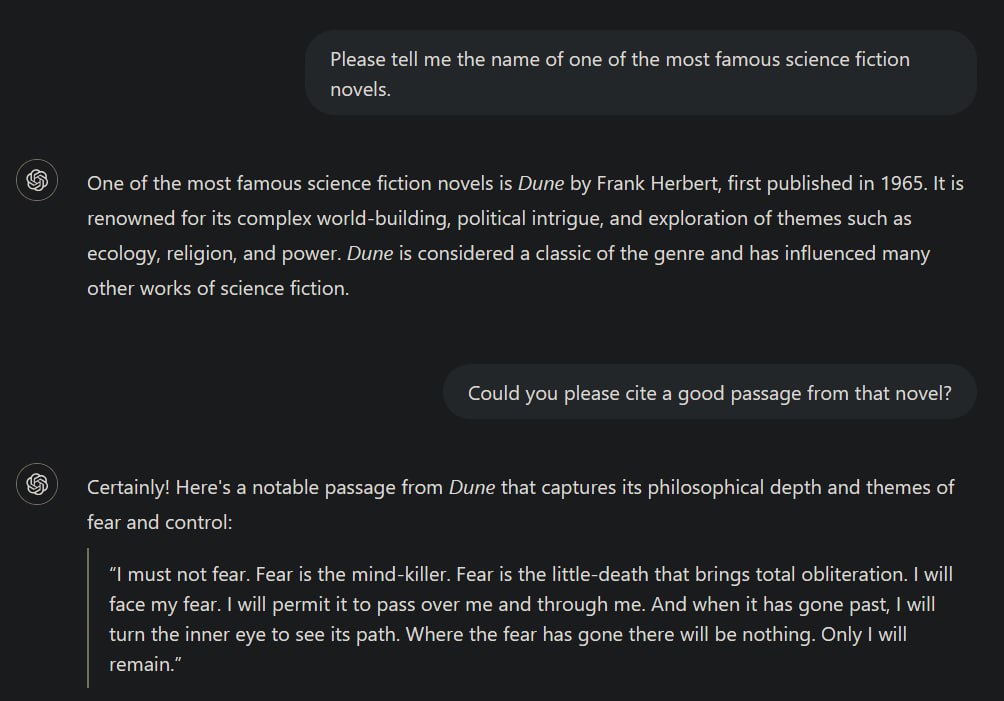

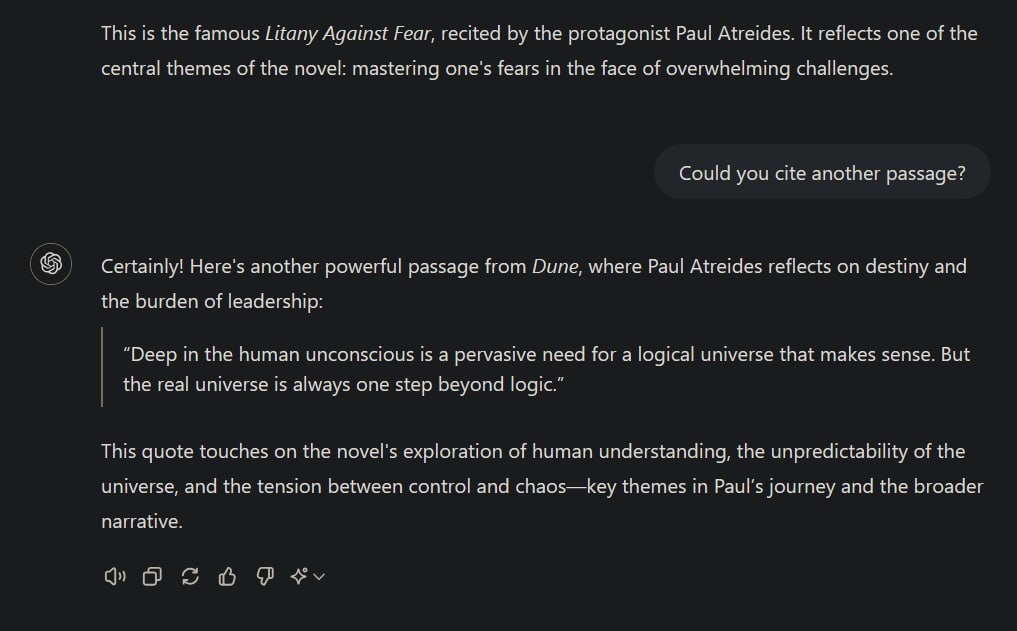

My point is that the following statement is not entirely correct:

When AI systems ingest copyrighted works, they’re extracting general patterns and concepts […] not copying specific text or images.

One obvious flaw in that sentence is the general statement about AI systems. There are huge differences between the different realms of AI. Failing to address those, at least by mentioning them briefly, disqualifies the author regarding factual correctness. For example, there is a plethora of non-generative AIs, meaning those that do not generate text, audio or images/videos but merely operate as, for instance, a classifier or clustering algorithm. Without further modifications, these are not intended to replicate data similar to their inputs but rather to provide insights.

However, I can overlook this as the author might have just not thought about that in the very moment of writing.

Next:

While it is true that transformer models like ChatGPT try to learn patterns, i.e., the most likely next token in a sequence of contextually coherent data, it is not unlikely that, given the right context, such a model reproduces its training data nearly or even completely identically, as I’ve demonstrated before. The less data is available for a specific context to generalise from, the more likely it becomes that the model just replicates its training data. This is in principle fine because this is what such models are designed to do: draw the best possible conclusions from the available data to predict the next output in a sequence. (That’s one of the reasons why they need such an insane amount of data to be trained on.)

This can ultimately lead to occurrences of indeed “copying specific texts or images”.
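To make the sparse-context argument a bit more tangible, here is a toy sketch. Note the assumptions: this is a plain n-gram counter with greedy decoding, not a transformer, and the corpus, the function names `train` and `generate`, and the context size are all made up for illustration. It only shows the general tendency: a context seen in a single training text leaves the model exactly one continuation at every step, so the output is that text verbatim.

```python
from collections import Counter, defaultdict

def train(corpus, n=2):
    """Count which token follows each context of n tokens."""
    model = defaultdict(Counter)
    for text in corpus:
        tokens = text.split()
        for i in range(len(tokens) - n):
            context = tuple(tokens[i:i + n])
            model[context][tokens[i + n]] += 1
    return model

def generate(model, prompt, n=2, max_tokens=20):
    """Greedy decoding: always append the most frequent next token."""
    tokens = prompt.split()
    for _ in range(max_tokens):
        followers = model.get(tuple(tokens[-n:]))
        if not followers:
            break  # context never seen during training
        tokens.append(followers.most_common(1)[0][0])
    return " ".join(tokens)

# Hypothetical corpus: one topic with several variants, one topic with a single text.
corpus = [
    "the cat sat on the mat",
    "the cat sat on the sofa",
    "the cat sat on the mat",
    "quantum chromodynamics describes the strong interaction between quarks",
]

model = train(corpus)

# Well-covered context: the model has more than one continuation to weigh.
options_common = dict(model[("on", "the")])

# Sparse context: every step has a single known continuation,
# so the training sentence comes back word for word.
rare = generate(model, "quantum chromodynamics")

print(options_common)
print(rare)
```

A real language model generalises far better than this counter, of course, but the dependence on how much data backs a given context is the same kind of effect I am describing.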

but the fact that you prompted the system to do it seems to kind of dilute this point a bit

It doesn’t matter whether I directly prompted it to do it. I set the correct context to achieve this kind of behaviour, because context matters most for transformer models. Directly prompting it to do that was just an easy way of setting the required context. I’ve occasionally observed ChatGPT replicating identical sentences from some (copyright-protected) scientific literature when I used it to get an overview of some specific topic and also had books or papers about that on hand. The latter demonstrates again that transformers become more likely to replicate training data the more “specific” a context becomes, i.e., when significantly less training data is available for that context than for others.

When AI systems ingest copyrighted works, they’re extracting general patterns and concepts - the “Bob Dylan-ness” or “Hemingway-ness” - not copying specific text or images.

Okay.

*Björn

It never left.

If someone comes to seek asylum in a foreign country or wants to build a life there, but so willingly disregards its laws, they have overstayed their welcome.

However, that does not mean that deporting them back to where they came from is necessarily better in case torture, death or similar things await them there. In that case they could be killed, tortured, whatever right on the spot, as the outcome will be the same. No need to fly them out then. There is blood on one’s hands either way, and therefore it is not a solution I would deem meaningful.

Coding is already dead. Most coders I know spend very little time writing new code.

Oh no, I should probably tell this to my whole company and all of their partners. We’re just sitting around getting paid for nothing apparently. I’ve never realised that. /s

While I highly doubt that will become true for at least a decade, we can already replace CEOs with AI, you know? (:

https://www.independent.co.uk/tech/ai-ceo-artificial-intelligence-b2302091.html

They do indeed forbid it.

Deuteronomy 21

Oh man, religions are batshit crazy.