Snowden added, “As someone who wants to sweep away corruption, there’s a lot to like in the new ShamWow. And for those really tough, dirty stains, there’s OXYCLEAN!!! With ShamWow and Oxyclean, you don’t need to be Rushin’!”

I’ll wait for the Julian Assange review.

That headline is so stupid that I refuse to read the article

What the fuck is wrong with this timeline.

Do the amish accept atheists?

The real question is whether they accept hypersexual autists.

EDIT: … unable to exist without Tao Te Ching, thyme tea and a few good soundtracks.

But what does Ja Rule think?

Who cares what Ja Rule thinks? I’m holding out for Busta Rhymes.

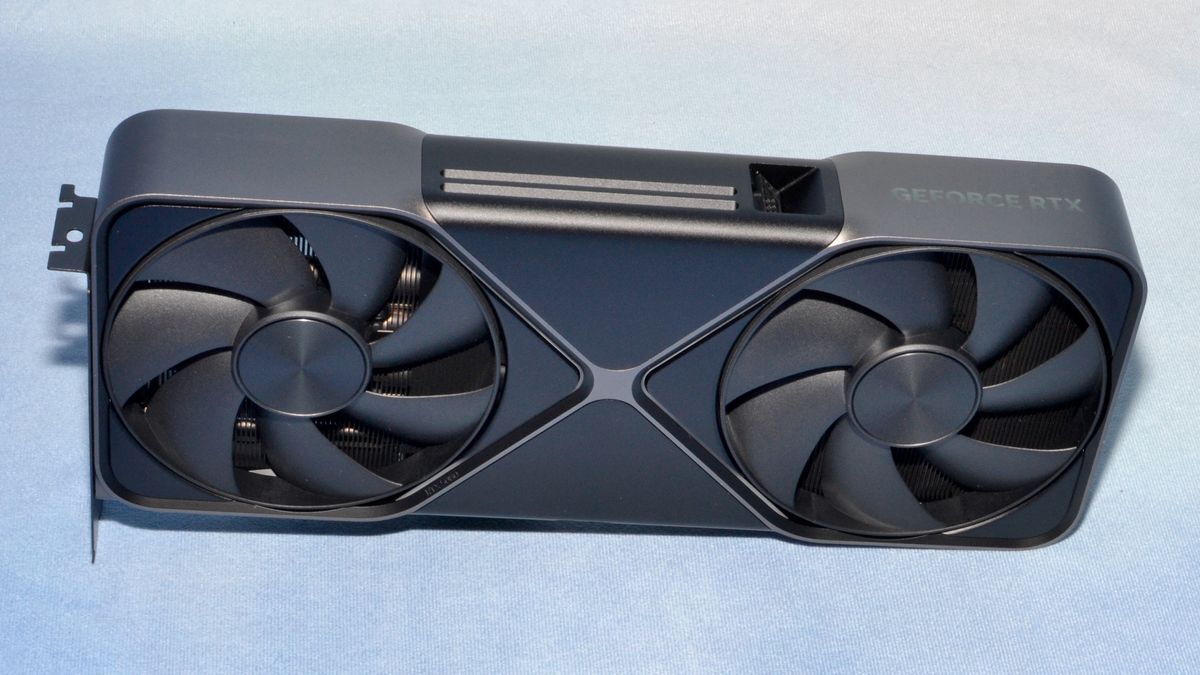

I can’t see the context because it’s freaking X, but I bet that’s in reference to local ML hosting.

There’s a big movement to get away from corporate AI, and I don’t need to explain the importance of that to the Lemmy crowd. But Nvidia is indeed artificially crippling consumer VRAM to stop consumer cards from being used for that too much, and to protect their enterprise GPU market.

The most bizarre thing is that AMD is inexplicably complicit even though they have like zero market share in that space. 48GB 7900s (and so on) would have obliterated Nvidia and sold like hotcakes, much less actually using their modular memory controller architecture… But no? They restricted their OEMs from doing that because they… Don’t want money, I guess.

AMD is as much of a scum as Nvidia is or Intel was, that’s why DeepSeek is something that came from China and you would need a new player completely outside of the current chain.

I don’t see how that’s relevant, Deepseek was trained on, and is served on, Nvidia hardware.

And while I don’t disagree about AMD gouging, if AMD was to act like “scum,” they would screw over Nvidia’s pricing strategy.

But… they don’t. And lose for it.

It’s some combination of ignorance, corporate stupidity and straight up collusion, but it’s also the opposite of greed.

DeepSeek is just a recent example to the usual Microsoft or Google or Apple aka Nvidia-AMD and now at most Intel. This is not about which GPU can run what.

Deepseek is like an ant compared to OpenAI/Anthropic/Google, and they come from a completely different world, the actually competitive open LLM dev scene with dozens of companies publishing good models. I think this is a bad analogy, as AMD is not that small and new compared to Nvidia.

“Whistleblows”. What a moronic take. In this regard, taking the word of Edward Snowden is like taking the word of a random stranger in the street. At least we know what Edward Snowden is likely spending his days on in Russia: gaming. Wouldn’t blame him, it’s not like he can freely travel.

An infamous former U.S. National Security Agency (NSA) contractor and whistleblower has unexpectedly shared his opinion on the state of the graphics card market.

Man who did big cool thing once also has opinions on unrelated thing, news at 11.

Edward Snowden reads a spec sheet

So now we care what Edward Snowden says about vram? We need him to tell us that it should be 24 gigs?

Was this written by AI? The headline word salad contains all the buzzwords.

Edward Snowden PILEDRIVES the Nvidia RTX 50 series into a crowded bitcoin farm

“Trash fuckin cuck card kys”

Don’t let that distract you from the fact that in 2025 Edward Snowden threw Nvidia’s RTX 50 series off Hell In A Cell and plummeted 16 ft through an announcer’s table.

Whistleblows on poor performance is actually insane lol

That might’ve made sense if he was working as a contractor for Nvidia and revealed that info prior to launch. Maybe. Well, actually no, but it’s a lot closer than whatever this is.

What even is this?

Every time I see a headline that contains the word “slams,” I want to slam my head on the table

And the user who posted it. I really wish there was a simple way to block sensational posts from my feed.

Thanks for giving me the idea. BRB, making a keyword filter for the words “slams” and “slammed”.

But then you lose out on a bunch of wrestling posts. And naked wrestling posts.

And nothing of value was lost.

Careful now, you might end up with few news posts

Don’t forget blasted and clapped back.
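For anyone who wants to try the keyword filter idea from above, here is a minimal sketch. It assumes you already have post titles as plain strings; the word list and the function names are made up for illustration and are not part of any Lemmy client or API.

```python
import re

# Hypothetical block list taken from the words mentioned in this thread.
SENSATIONAL_WORDS = ["slams", "slammed", "blasted", "clapped back"]

# Word boundaries keep "slams" from matching inside longer words,
# and IGNORECASE catches "SLAMS" as well.
PATTERN = re.compile(
    r"\b(?:" + "|".join(re.escape(w) for w in SENSATIONAL_WORDS) + r")\b",
    re.IGNORECASE,
)


def is_sensational(title: str) -> bool:
    """Return True if the title contains any block-listed word or phrase."""
    return PATTERN.search(title) is not None


def filter_feed(titles: list[str]) -> list[str]:
    """Keep only the titles that do not trip the keyword filter."""
    return [t for t in titles if not is_sensational(t)]
```

As the replies point out, a plain keyword match also drops wrestling posts, so treat the word list as something to tune rather than a finished rule.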

Kamoulox

Edward Snowden doing GPU reviews? This timeline is becoming weirder every day.

“Whistleblows” as if he’s some kind of NVIDIA insider.

Intel Insider now that would’ve made for great whistleblowing headlines.

Swear next he’s gonna review hentai games

“Some hentai games are good” -Edward Snowden

Note that this is from 2003

I’ll keep believing this is a theonion post

Legitimately thought this was a hard-drive.net post

I bet he just wants a card to self host models and not give companies his data, but the amount of vram is indeed ridiculous.

Exactly, I’m in the same situation now and the 8GB in those cheaper cards doesn’t even let you run a 13B model. I’m trying to research if I can run a 13B one on a 3060 with 12 GB.

You can. I’m running a 14B deepseek model on mine. It achieves 28 t/s.

Oh nice, that’s faster than I imagined.

You need a pretty large context window to fit all the reasoning. Ollama forces 2048 by default, and a larger window uses more memory.
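A rough sketch of how the context window can be raised per request through Ollama's local HTTP API, using the `num_ctx` option. The value 8192 and the helper names are assumptions for illustration; pick whatever fits in your VRAM. The endpoint is Ollama's default local address, and the model must already be pulled.

```python
import json
import urllib.request

# Default address of a locally running Ollama server.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str, num_ctx: int = 8192) -> dict:
    """Build a non-streaming generate request with a custom context window."""
    return {
        "model": model,
        "prompt": prompt,
        "stream": False,
        # num_ctx sets the context window in tokens; larger values use more memory.
        "options": {"num_ctx": num_ctx},
    }


def generate(payload: dict, url: str = OLLAMA_URL) -> dict:
    """Send the request to the Ollama server and return the parsed JSON reply."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())


if __name__ == "__main__":
    payload = build_request("deepseek-r1:14b", "Why is the sky blue?")
    print(generate(payload)["response"])
```

If the model starts spilling out of VRAM after raising `num_ctx`, generation speed usually drops a lot, so it is worth stepping the value up gradually.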

I also have a 3060, can you detail which framework (sglang, ollama, etc) you are using and how you got that speed? I’m having trouble reaching that level of performance. Thx

Ollama, latest version. I have it set up with Open-WebUI (though that shouldn’t matter). The 14B is around 9GB, which easily fits in the 12GB.

I’m repeating the 28 t/s from memory, but even if I’m wrong it’s easily above 20.

Specifically, I’m running this model: https://ollama.com/library/deepseek-r1:14b-qwen-distill-q4_K_M

Edit: I confirmed I do get 27.9 t/s, using default ollama settings.
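If you want to check a t/s figure like this yourself, one way (a sketch, not necessarily how the number above was measured) is to read it off a non-streaming Ollama API response, which reports `eval_count` (generated tokens) and `eval_duration` (nanoseconds). The sample numbers in the usage lines are invented for illustration.

```python
def tokens_per_second(response: dict) -> float:
    """Compute generation speed from an Ollama generate/chat response.

    eval_count is the number of generated tokens and eval_duration is the
    time spent generating them, in nanoseconds.
    """
    return response["eval_count"] / response["eval_duration"] * 1e9


if __name__ == "__main__":
    # Invented sample values: 279 tokens generated in 10 seconds.
    sample = {"eval_count": 279, "eval_duration": 10_000_000_000}
    print(f"{tokens_per_second(sample):.1f} t/s")
```

Running `ollama run <model> --verbose` in a terminal prints a similar eval rate after each reply, which is handy for a quick comparison.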

Ty. I’ll try ollama with the Q4_K_M quantization. I wouldn’t expect to see a difference between ollama and SGLang.

Thanks for the additional information, that helped me decide to get the 3060 12G instead of the 4060 8G. They have almost the same price, but from what I gather the 3060 12G seems to fit my use cases better even though it is a generation older. The memory bus is wider and it has more VRAM. Both video editing and the smaller LLMs should work well enough.

I’m running deepseek-r1:14b on a 12GB rx6700. It just about fits in memory and is pretty fast.
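A back-of-the-envelope check on why a 14B model at 4-bit "just about fits" in 12GB but not in 8GB. This is only a sketch: the roughly 14.8B parameter count, the roughly 4.85 bits per weight for a Q4_K_M-style quant, and the 1.5 GB allowance for context cache and runtime overhead are all assumptions, not measured values.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_in_vram(weights_gb: float, vram_gb: float, overhead_gb: float = 1.5) -> bool:
    """Rough fit check; overhead_gb is an assumed allowance for cache and runtime."""
    return weights_gb + overhead_gb <= vram_gb


if __name__ == "__main__":
    q4 = weight_memory_gb(14.8, 4.85)   # assumed 4-bit quant, about 9 GB
    fp16 = weight_memory_gb(14.8, 16)   # unquantized 16-bit weights
    print(f"4-bit: {q4:.1f} GB, fp16: {fp16:.1f} GB")
    print("fits in 12 GB:", fits_in_vram(q4, 12))
    print("fits in 8 GB:", fits_in_vram(q4, 8))
```

The estimate lands near the "around 9GB" figure quoted earlier in the thread. A bigger context window eats into the remaining headroom, which is why the default settings matter on a 12GB card.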

Does he work for Nvidia? Seems out of character for him.